For India’s startup founders, the creation of the AI Governance and Economic Group (AIGEG) is not just another policy headline. It signals the country’s first serious attempt to stitch together a fragmented regulatory landscape where seven ministries issued overlapping advisories with no single authority to resolve conflicts. What looks like an advisory panel is in fact a structural experiment: can India build a central node that balances innovation with liability, and job displacement with digital ambition? The deeper story is whether startups gain breathing space to innovate, or whether the absence of binding law leaves them exposed to sudden regulatory shocks. In this Vishleshan, we decode AIGEG’s mandate, track its implications for India’s AI economy, and assess if this advisory body can evolve into the institutional backbone of real AI legislation.

As India forms AI policy panel, what it means for startups

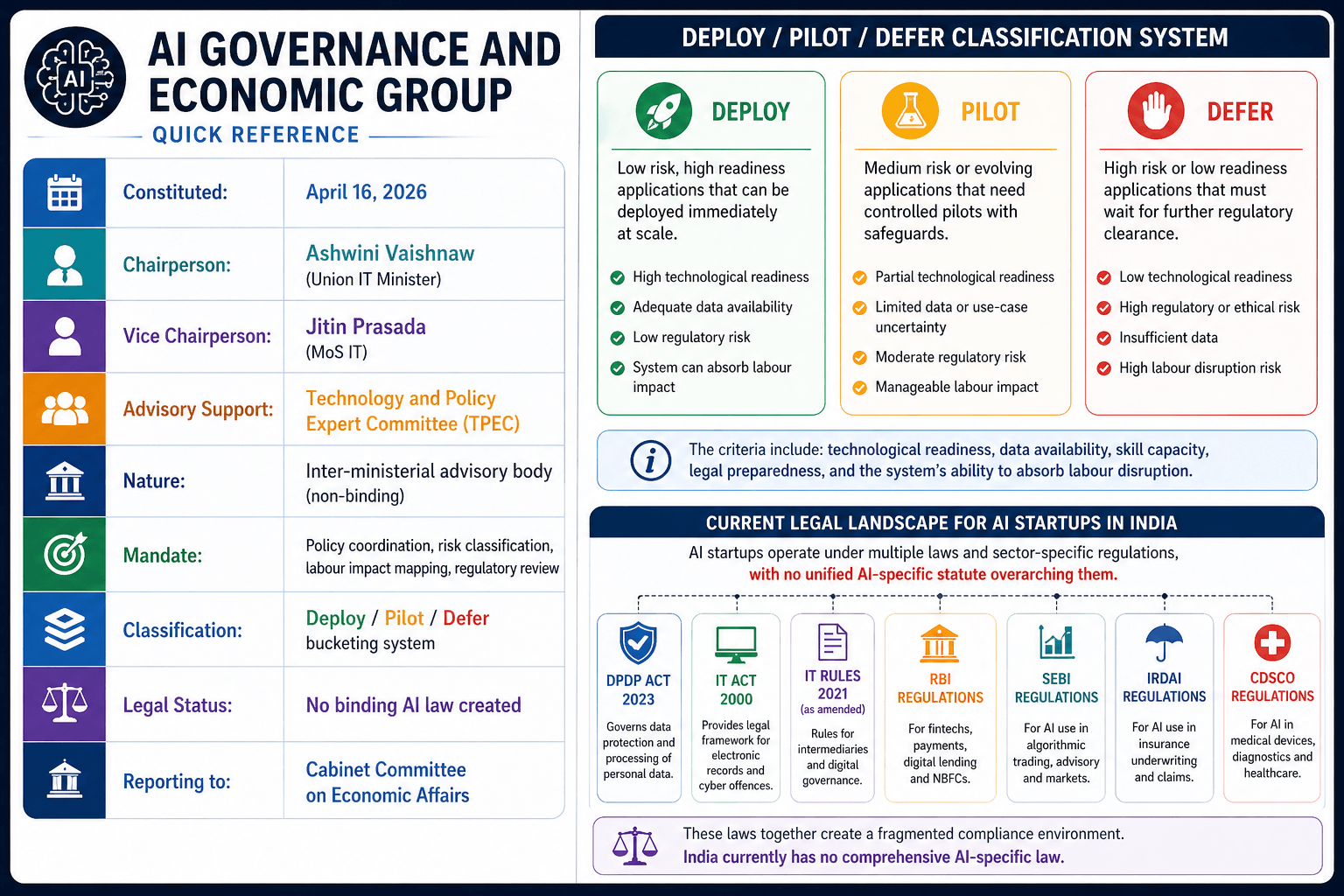

Context: India’s AI startup ecosystem is the third-largest in the world, with over 3,000 active AI startups, ₹10,371 crore in public AI investment under the IndiaAI Mission, and a digital economy projected to hit $1 trillion by 2030. Yet these startups have operated in a regulatory vacuum: no statutory definition of an “AI system,” no risk classification framework, and no clear liability structure. On April 16, 2026, the government formally constituted the AI Governance and Economic Group (AIGEG), India’s first apex inter-ministerial body for AI governance, to begin filling that gap. This is not a law. It is the institutional architecture that precedes one. The Mint article asks the right question: does anything actually change for startups today, and what should they prepare for tomorrow?

Link to the Article: Mint

India’s AI regulatory journey began not with a law but with principles. In November 2025, MeitY released India’s AI Governance Guidelines, a voluntary, principle-based framework built around seven “Sutras” anchoring safety, transparency, equity, and innovation. These guidelines were aspirational, not enforceable. What was missing was the institutional engine to translate those principles into coordinated policy. AIGEG is meant to fill that gap.

What Is the AI Governance and Economic Group?

India’s first apex inter-ministerial advisory body for AI policy — a central coordination node that aligns how ministries, regulators, and industry approach artificial intelligence.

Nature: Advisory. Non-binding. It does not create law, cannot fine companies, and has no licensing authority — yet.

Composition:

- Chairperson: Union IT Minister Ashwini Vaishnaw

- Vice Chairperson: Minister of State Jitin Prasada

- Supported by: Technology and Policy Expert Committee (TPEC) — an expert advisory body studying global AI developments and emerging risks

Why it exists: India’s AI governance was fragmented across at least seven ministries (MeitY, Health, Finance, Labour, Commerce, Education, and Legal Affairs), each issuing sector-specific advisories with no coordination mechanism. A startup building AI for healthcare had to satisfy the DPDP Act, the IT Rules, the Clinical Establishments Act, and CDSCO guidelines at the same time, with no single authority to resolve conflicts between them. AIGEG is the structural answer to that fragmentation.

What India’s AI Governance Body Actually Controls

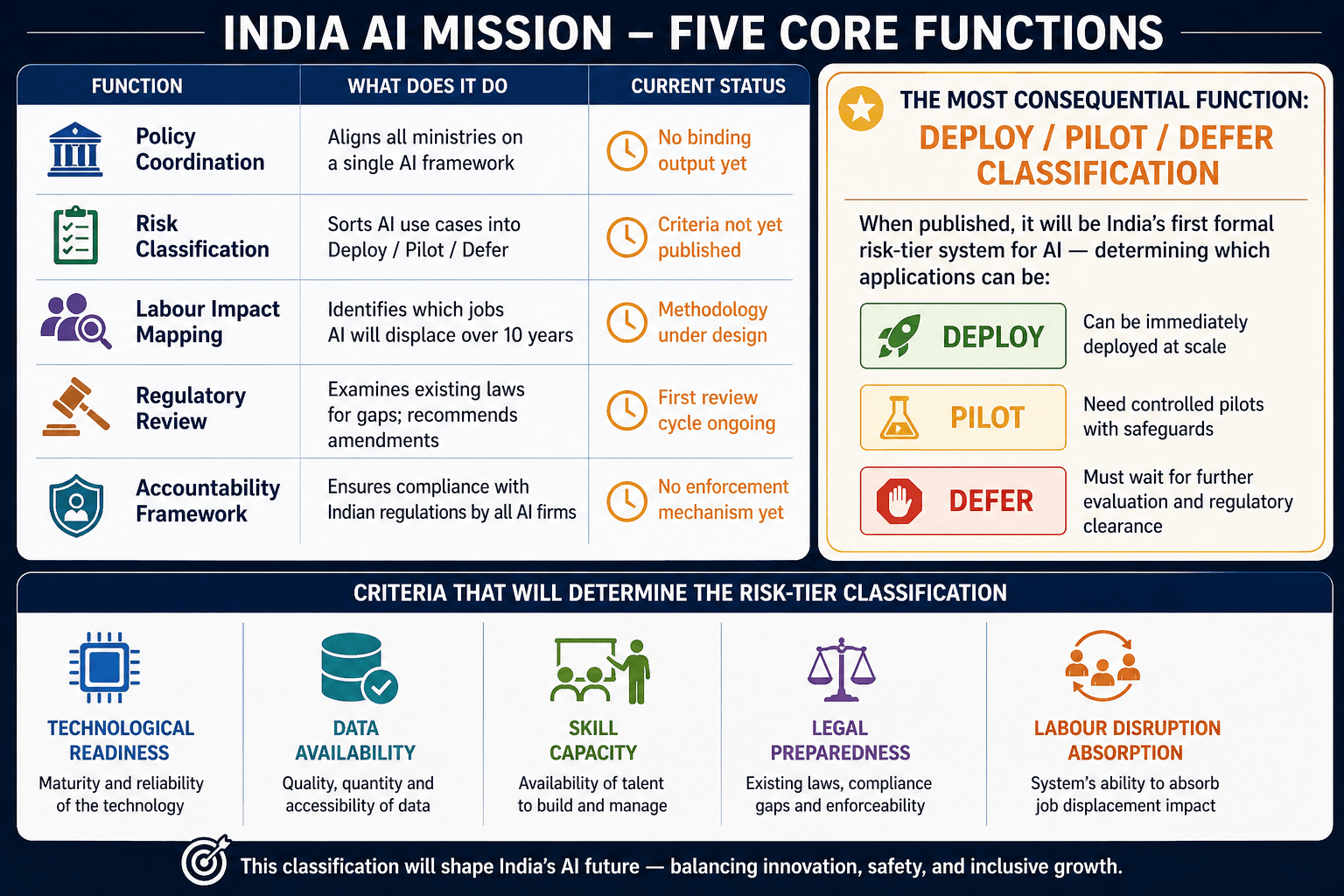

AIGEG’s mandate breaks into five distinct functions:

AIGEG at a Glance

What AIGEG Really Signals — Beyond the Press Release

Layer 1 — The Fragmentation Problem Is Deeper Than It Looks

- The article frames fragmentation as a coordination problem. It is actually a liability vacuum.

- In a typical Indian AI deployment, the model is developed by one company, trained on another’s cloud infrastructure, fine-tuned by a third, and deployed by a fourth. The DPDP Act requires identifying who is the “data fiduciary” and who is the “data processor” — but in this four-party chain, no existing guidance allocates that responsibility.

- AIGEG’s coordination mandate is really about resolving this liability stack before a high-profile AI failure forces a judicial ruling to do it instead. The sharp truth: India’s AI regulation is not slow — it is waiting for a crisis to give it urgency. AIGEG is the attempt to act before that crisis arrives.

Layer 2 — “Deploy/Pilot/Defer” Is India’s Version of the EU Risk Tiers

- The EU AI Act uses four risk categories — Unacceptable, High, Limited, Minimal. India’s three-bucket system is structurally similar but deliberately softer.

- “Deploy” roughly corresponds to “Minimal Risk,” “Pilot” covers the transitional ground of “Limited” and “High Risk,” and “Defer” is India’s politically careful substitute for “Unacceptable Risk,” the EU’s prohibited category. The difference is not just semantic.

- The EU’s framework is pre-market for high-risk systems: you prove compliance through a conformity assessment before you launch. India’s framework will likely be post-market: you launch, AIGEG monitors, and your classification determines your ongoing obligations.

- For startups, this is a fundamentally friendlier operating environment. But it also means the regulatory shock, when it eventually arrives, will hit live products with live users — not prototypes.

Layer 3 — The Labour Mandate Is the Most Under-Reported Story

- Every headline about AIGEG focuses on startup regulation. The more consequential mandate is the 10-year job displacement mapping.

- India’s Economic Survey has already flagged AI as the most significant structural threat to mid-skill white-collar work in the country’s recent history. Data entry, back-office operations, entry-level legal and financial work, basic customer service — these are the roles most exposed, and they represent millions of formal-sector jobs. AIGEG will produce the first official government estimate of that displacement, sector by sector.

- That number, when published, will do more than inform AI policy. It will shape skilling budgets, influence unemployment frameworks, and affect how Parliament thinks about regulating AI at all.

- A body asked to simultaneously promote AI deployment and protect workers from its consequences will eventually find those two mandates pulling against each other. How AIGEG handles that tension will say more about the character of India’s AI law than any technical classification decision.

Binding Law or Perpetual Advisory?

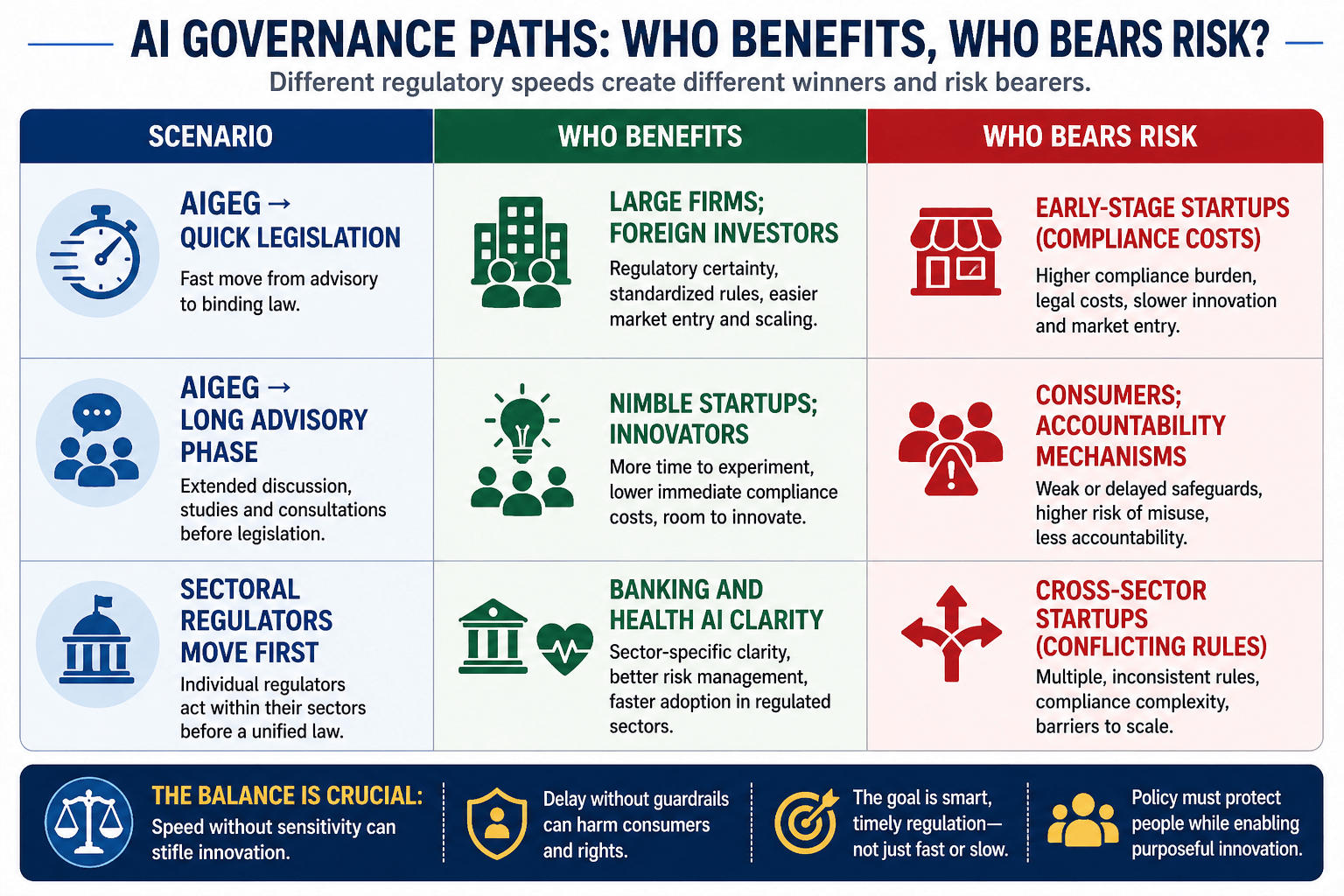

- The real question behind AIGEG is not about classification buckets or liability chains. It is simpler and more fundamental: will any of this actually become law, and if so, when?

- AIGEG can only advise; the power to legislate rests with Parliament, acting on bills the Cabinet approves. India took five years to move from the 2018 data protection report to the 2023 DPDP Act, but the AI sector and the people affected by it cannot afford a delay of that length.

- The single indicator worth watching most closely is whether RBI, SEBI, and IRDAI issue binding AI directions before AIGEG publishes its classification criteria. If sectoral regulators move first, fragmentation does not shrink; it hardens into three separate regulatory languages that a single startup may need to speak simultaneously.

Four Honest Constraints

1. No enforcement, no urgency: AIGEG has no power to fine, license, or sanction. Without an enforcement arm, its classification framework is a recommendation that companies are free to contest or ignore until legislation converts it into law.

2. DPDP obligations are not yet fully operational: The DPDP Act was passed in 2023, and its implementing rules were notified in November 2025, but most of the substantive compliance obligations for data fiduciaries phase in over 18 months. AIGEG cannot fully resolve AI-specific data liability ambiguity until the foundational obligations beneath it are in force and tested.

3. Sector regulators may move faster and inconsistently: RBI’s AI-fraud framework, SEBI’s RAIDAR surveillance tool, and IRDAI’s AI underwriting guidelines are all being developed in parallel, without coordination with AIGEG. The result could be three different AI liability standards for a fintech startup offering insurance-linked credit products.

4. Labour mapping and innovation promotion are structurally in tension: The jobs-protection mandate and the innovation mandate will eventually generate contradictory recommendations, and choosing between them will be a political decision, not a purely technocratic one.

What Happens Next, and the One Signal Worth Watching

AIGEG’s first substantive output, the Deploy/Pilot/Defer classification criteria, is the immediate deliverable to watch. If published within 90 days, it signals institutional seriousness. If it drifts through FY27 without output, the body risks becoming another consultative process without consequence. Simultaneously, the phased rollout of DPDP obligations remains the most important pending development for AI startups: until those obligations take effect and are interpreted, the compliance ambiguity AIGEG is meant to resolve persists. The EU AI Act’s high-risk provisions are scheduled to apply from August 2026, creating external pressure on Indian startups with European exposure to build compliance architecture that India’s own law does not yet require. As one policy architect put it privately: India is not behind on AI governance; it is choosing to be last so it can be right. The question is whether “right” arrives before the first major AI-caused harm forces the answer.
